Online Help icom Data Suite

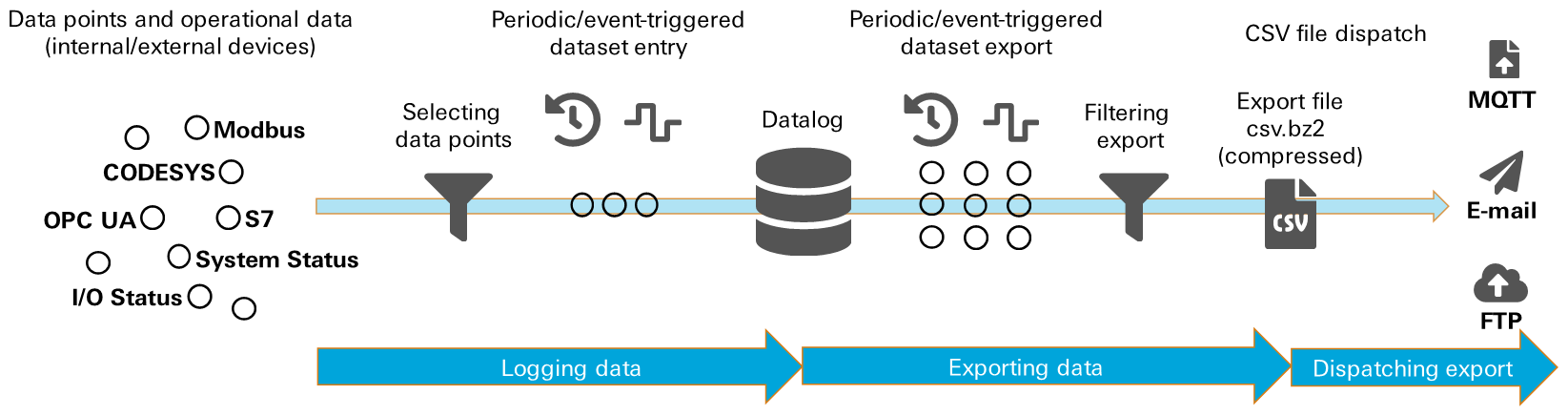

Data logger

A datalog permits periodic and event-triggered writing of selected data points of the icom Data Suite into a database. Several datalogs can be configured simultaneously; they write different data points into the database independently of each other. The content of each datalog can be exported individually into a CSV file. These CSV files can either be dispatched or downloaded in the icom Data Suite.

Applications

Windowing

Data are logged across a fixed time span and then discarded (FIFO buffer). If a failure or a certain event occurs, the data are exported and saved.
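The windowing behaviour can be illustrated with a minimal sketch. It is not the icom Data Suite implementation; the class `WindowLog`, its parameter `window_s` and the sample data point `temperature` are hypothetical names chosen for illustration only.

```python
from collections import deque


class WindowLog:
    """Keeps only the datasets written within the last `window_s` seconds."""

    def __init__(self, window_s):
        self.window_s = window_s
        self.buffer = deque()  # (timestamp, dataset) pairs, oldest first

    def write(self, timestamp, dataset):
        self.buffer.append((timestamp, dataset))
        # Discard datasets that have dropped out of the time window (FIFO).
        while self.buffer and self.buffer[0][0] <= timestamp - self.window_s:
            self.buffer.popleft()

    def export(self):
        """Return a copy of the buffered datasets, e.g. when a failure occurs."""
        return list(self.buffer)


log = WindowLog(window_s=60)
for t in range(0, 100, 10):  # one dataset every 10 seconds
    log.write(t, {"temperature": 20 + t / 10})

snapshot = log.export()  # only the datasets of the last 60 seconds remain
```

In this sketch the export simply copies the buffer; in the product the export is performed by an action that is triggered by the failure or event.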

Variable logging interval

For analysis purposes, logging can be configured so that the logging frequency is increased, or an additional log entry (dataset) is written, when certain (non-periodic) events occur.

Export and dispatch

The datalogs can be exported as xz-compressed CSV files, either at regular intervals or triggered by certain events. These export files can then, again at regular intervals or triggered by certain events, be dispatched via e-mail, uploaded to an FTP server or transmitted via MQTT (not yet implemented).

Function

Selecting the entries

A dataset can be compiled for each datalog from the data points added in the icom Data Suite, operational data and system variables.

Data points can be internal data, such as I/O states, flags, router system states or results of internal computations, or values read from external devices, for example via Modbus or OPC UA.

System variables, such as location, serial number or timestamp in various formats, can be included in the dataset.

The entry Causing event adds to the dataset the event that triggered the writing of this dataset (periodic, manual action or the entered description of the event).

The entries for time and date information use the system time of the router and take the time zone setting of the icom Data Suite into account.

Make sure that the system time of the router is correct.

Writing the datasets

Writing is triggered either periodically at a specified interval or by an action upon the occurrence of an event. The value that each data point (entry) configured for the datalog has at this point in time is then written into the database as a dataset. If the datalog contains data points of external devices (such as Modbus devices), the value written is not necessarily the current value in the device, but the last value read from the device in the polling interval.
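The difference between the value in the device and the logged value can be sketched as follows. This is an illustrative model only; `PolledDataPoint`, `write_dataset` and the data point name `pressure` are hypothetical and do not reflect the internal structure of the icom Data Suite.

```python
class PolledDataPoint:
    """Caches the value obtained during the most recent poll of a device."""

    def __init__(self, read_device):
        self.read_device = read_device  # callable that queries the device
        self.last_value = None

    def poll(self):
        self.last_value = self.read_device()


def write_dataset(data_points, causing_event):
    """Build a dataset from the cached values; the device is not queried here."""
    dataset = {name: dp.last_value for name, dp in data_points.items()}
    dataset["causing_event"] = causing_event
    return dataset


device_values = iter([10, 11])  # the device reports 10 first, later 11
pressure = PolledDataPoint(lambda: next(device_values))

pressure.poll()  # polling interval elapses: 10 is read and cached
# The value in the device may change now, but no new poll has taken place.
dataset = write_dataset({"pressure": pressure}, "periodic")
```

The dataset contains the value 10 from the last poll, regardless of what the device would report at the moment of writing.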

Limiting the database size

To limit the database size, the database can be rolled, i.e. the oldest datasets are deleted when the limit is reached. Automatic rolling can be based on a count or performed periodically. If rolling is based on a count, the oldest dataset is deleted when the configured count is reached. If rolling is performed periodically, datasets are deleted if they are older than the specified duration.
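Both rolling variants can be sketched on a plain list of datasets. The function names `roll_by_count` and `roll_by_age` and the tuple layout (timestamp in seconds, value) are assumptions made for this example, not part of the product.

```python
def roll_by_count(datasets, max_count):
    """Keep only the newest `max_count` datasets (list is ordered oldest first)."""
    if max_count <= 0:
        return []
    return datasets[-max_count:]


def roll_by_age(datasets, now, max_age_s):
    """Delete datasets that are older than `max_age_s` seconds."""
    return [(ts, value) for ts, value in datasets if now - ts <= max_age_s]


datasets = [(0, "a"), (10, "b"), (20, "c"), (30, "d")]

by_count = roll_by_count(datasets, 3)                 # drops the oldest dataset
by_age = roll_by_age(datasets, now=35, max_age_s=20)  # drops datasets older than 20 s
```

In both cases the oldest datasets are removed first, so the most recent data always remain in the database.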

Exporting the datasets

The export is performed by an action, which can be triggered periodically by a timer or by an event. The action exports the datasets of the selected datalog into an xz-compressed CSV file. Each line of the CSV file contains one dataset; the individual entries are separated by semicolons. Optionally, the exported datasets can be deleted from the database after the export. The datasets to be exported can also be filtered by count or by duration:

- Count: the specified count of the latest datasets will be exported

- Duration: the datasets that have been written in the specified time span will be exported
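The export format described above (semicolon-separated CSV, xz-compressed) can be written and read with the Python standard library. The following is a sketch under assumptions: the column layout (timestamp, value, causing event), the file name `example.csv.xz` and the helper names are hypothetical, and the actual export files may contain different columns.

```python
import csv
import lzma
import os
import tempfile


def filter_datasets(datasets, count=None, since=None):
    """Filter by duration (timestamp >= since) and/or by count (newest N)."""
    if since is not None:
        datasets = [d for d in datasets if d[0] >= since]
    if count:
        datasets = datasets[-count:]
    return datasets


def export_datasets(datasets, path):
    """Write one dataset per line, entries separated by semicolons, xz-compressed."""
    with lzma.open(path, "wt", newline="", encoding="utf-8") as f:
        csv.writer(f, delimiter=";").writerows(datasets)


def read_export(path):
    """Read an export file back; all entries are returned as strings."""
    with lzma.open(path, "rt", newline="", encoding="utf-8") as f:
        return list(csv.reader(f, delimiter=";"))


datasets = [
    (100, 21.5, "periodic"),
    (160, 21.7, "periodic"),
    (175, 35.2, "overtemperature"),
]

path = os.path.join(tempfile.mkdtemp(), "example.csv.xz")
export_datasets(filter_datasets(datasets, count=2), path)
rows = read_export(path)
```

The same `read_export` approach can be used on a workstation to inspect a downloaded export file without decompressing it manually.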

When the configuration of the data logger (and of the associated events and actions) is modified, the complete database is exported and deleted upon activation of the profile. This export file is marked by the string reconfigured in its file name.

Dispatching an export file with a message

The most recent export file of each datalog can be attached to an e-mail message or uploaded to an FTP server.

The e-mail dispatch or FTP upload is then performed by an action.

Since several datalog export files or status log files can be selected for dispatch or upload, these are packed into a tar file and compressed using Gzip.

Upload to an FTP server and dispatch via MQTT message are planned.
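Such a gzip-compressed tar archive can be created and inspected with the Python `tarfile` module. This sketch only illustrates the container format; the file names and the helpers `pack_files` and `list_archive` are hypothetical, and the naming used by the icom Data Suite may differ.

```python
import os
import tarfile
import tempfile


def pack_files(paths, archive_path):
    """Pack the given files into a gzip-compressed tar archive."""
    with tarfile.open(archive_path, "w:gz") as tar:
        for p in paths:
            # Store only the file name, not the full directory path.
            tar.add(p, arcname=os.path.basename(p))
    return archive_path


def list_archive(archive_path):
    """Return the names of the files contained in the archive."""
    with tarfile.open(archive_path, "r:gz") as tar:
        return tar.getnames()


workdir = tempfile.mkdtemp()
paths = []
for name in ("datalog_a.csv.xz", "datalog_b.csv.xz"):  # placeholder files
    p = os.path.join(workdir, name)
    with open(p, "wb") as f:
        f.write(b"placeholder")
    paths.append(p)

archive = pack_files(paths, os.path.join(workdir, "export.tar.gz"))
names = list_archive(archive)
```

On the receiving side, `tarfile.open(archive, "r:gz")` gives access to the individual export files contained in the attachment or upload.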

Downloading an export file

The last ten export files are available for download in the Status menu on the Data logger page. If ten files already exist when a new export file is being created, the oldest will be deleted automatically.
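The retention rule for downloadable files corresponds to a bounded queue. A minimal sketch, assuming hypothetical file names of the form `export_NN.csv.xz`:

```python
from collections import deque

# A deque with maxlen=10 drops its oldest element when an eleventh is appended.
available = deque(maxlen=10)

for i in range(1, 13):  # twelve exports are created one after another
    available.append(f"export_{i:02d}.csv.xz")

files = list(available)  # the ten most recent export files, oldest first
```

After twelve exports, the two oldest files are no longer available; only the last ten remain.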